Rhys' Newsletter #66

Grace Lindsay on computational neuroscience, a network-native social contract, and is the 21st century the most important century ever?

This newsletter goes out to more than 1,000 ambitious frontier people. Please share it with a friend who would like it. (And welcome to all the folks who joined this week!)

Hello!

This is my last newsletter before I go on a 150-mile charity walk with my dad and brother this August. See you back here in September.

New Podcast of the Week

#89 Grace Lindsay: Your Brain Is Math

Apple Podcasts | Google Podcasts | Spotify

Grace is a computational neuroscientist who recently published the book, Models of the Mind: How Physics, Engineering and Mathematics Have Shaped Our Understanding of the Brain.

We chatted about mathematical models of the mind and how they inform the kinds of information that can live there.

Psychology and neuroscience tell us what the brain does. Computational neuroscience tells us how the brain works.

I found the book (and convo) extremely helpful for understanding how physics, computer science, network theory, information theory, and Bayesian probability theory have given us tools to understand the brain.

Highlights from our convo:

Interdisciplinary Multi-Level Explanations

Rhys: Can you explain how models of the mind come from different scientific fields?

Grace: I'm interested in building models that can synthesize a bunch of experimental data and provide a mechanism to explain them. A lot of the mathematical models that I talk about are in that category.

A neuron works the same way as an electrical circuit. You can take the equations from electrical engineering and use them to describe how a neuron takes an input and produces its output.

Then you have other models that try to explain how populations of neurons interact. Those pull from, for example, physics, which models how particles in a gas or a fluid interact. You can use those equations to model the interactions between neuron populations.

You can get more high level and look at things like the structure of the brain using graph theory or network science to understand the relationship between structure and function in the brain.

There isn't a single model that I'm proposing or advocating for. The brain is made up of so many different parts and can be dissected and studied in so many different ways.

Rhys: Yeah, I love that. There’s a skill that you have, multi-level explanations. Sometimes you’re talking about individual neurons, sometimes groups of neurons, sometimes the structure of neurons, and sometimes the expressed human behavior.
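To make the circuit analogy above concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, one common textbook way of treating a neuron's membrane as a resistor-capacitor circuit. It is not taken from the book or the podcast. The function name `simulate_lif` and every parameter value (time constant, resistance, threshold, and so on) are illustrative assumptions chosen only to be roughly plausible.

```python
def simulate_lif(input_current, dt=1e-4, tau=0.02, resistance=1e7,
                 v_rest=-0.070, v_threshold=-0.054, v_reset=-0.070):
    """Simulate a leaky integrate-and-fire neuron with forward Euler steps.

    input_current: list of input currents in amperes, one per time step.
    All default values are illustrative assumptions, not measured data.
    Returns (voltages, spike_times): membrane voltage in volts at each
    step, and the times in seconds at which the neuron spiked.
    """
    v = v_rest
    voltages = []
    spike_times = []
    for step, current in enumerate(input_current):
        # RC circuit equation: tau * dV/dt = -(V - V_rest) + R * I
        dv = (-(v - v_rest) + resistance * current) * (dt / tau)
        v += dv
        if v >= v_threshold:
            # Crossing the threshold counts as a spike; reset the voltage.
            spike_times.append(step * dt)
            v = v_reset
        voltages.append(v)
    return voltages, spike_times


# Half a second of constant input at two hypothetical current levels.
_, weak_spikes = simulate_lif([1e-9] * 5000)
_, strong_spikes = simulate_lif([2e-9] * 5000)
print(len(weak_spikes), len(strong_spikes))
```

With these assumed values, the weaker input leaves the voltage settling below threshold, while the stronger input pushes it over threshold repeatedly. That is the "takes an input and produces its output" behavior described above, in its simplest form.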

What Information Wants

Rhys: How should we think about the kinds of information that can be stored in the brain? Or, put differently, what kinds of info are “fit” to be stored in the mind?

Grace: There's a trend in neuroscience to use ethologically relevant tests in animals. Right now you take a mouse or a monkey, put it in front of a screen, show it basic lines and shapes, and have it do some task with that info. It's not what the animal is used to doing in its evolutionary niche, it's just not.

Instead, we are beginning to be more ethologically relevant, doing tests on things that animals would actually do in the wild.

So I think any answer to that question would have to, you know, specify whose brain, or at least what species’ brain, we are talking about.

For humans specifically: if you have people learn a complex graph, they will traverse it as though they’re walking through a house.

That suggests some kind of constraints on our thoughts. 3-dimensional space is how we have to think. Things in that form will be easiest for us to process. There are some video games that try to teach people to navigate a 4-dimensional space. It's really trippy and it takes a lot of practice.

To determine What Information Wants, we need to understand that its home (the brain) has evolved over millions of years to be fit for certain things (reproduction in a 3D world). Information wants to be “fit” with that reality.

Lots more in the podcast itself, including rate-based coding, the small-world hypothesis in neurons, how inspecting artificial neurons will help us inspect real neurons, and more.

Thanks Grace!

LINKS

1) How should an influencer sound? YouTube voice is coming for us all.

The medium is the message. The YouTube medium has determined what a “YouTuber voice” sounds like. TikTok is similar, just faster.

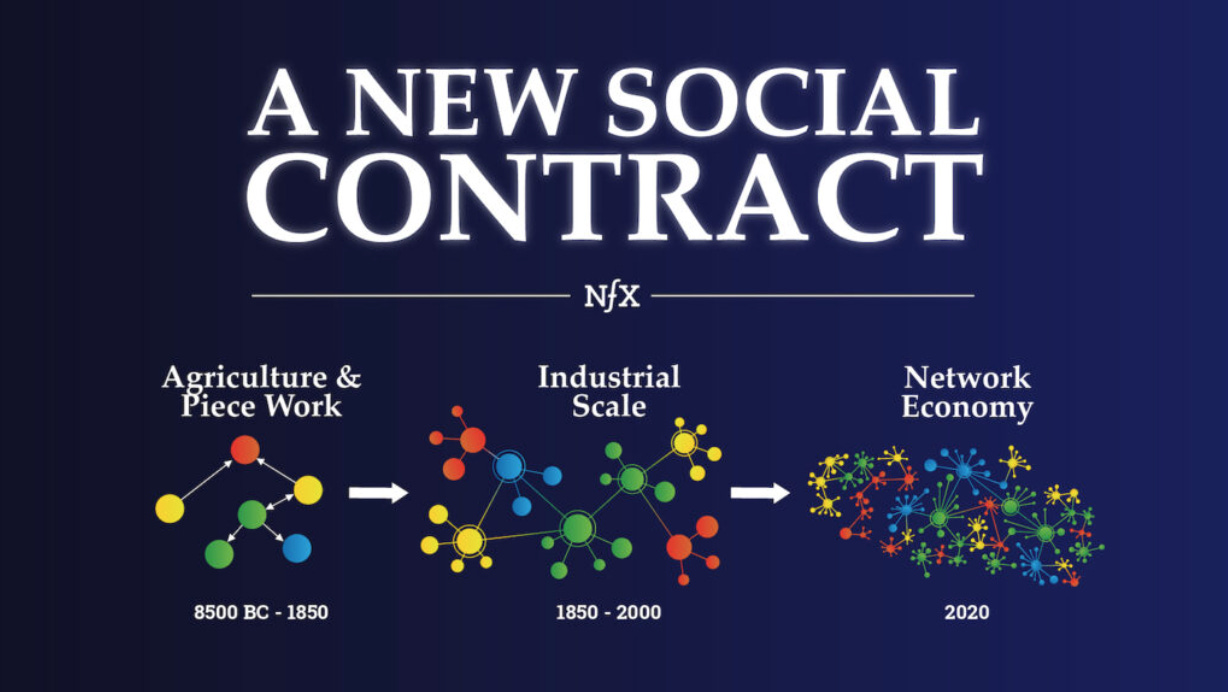

2) Network Effects Demand A New Social Contract

I don’t agree with everything James writes here. But I do think it’s important to think through a network-native social contract.

As futurist George Dyson says, with more than a little awe, “At some point in every planet’s history, it gets wired up and connected into a whole, just once. We have been alive at that time.”

3) Holden Karnofsky of Open Philanthropy has a new blog, Cold Takes. His first post is All Possible Views About Humanity's Future Are Wild.

In a similar vein as the Dyson quote above:

There's a decent chance that we live at the very beginning of the tiny sliver of time during which the galaxy goes from nearly lifeless to largely populated. That out of a staggering number of persons who will ever exist, we're among the first. And that out of hundreds of billions of stars in our galaxy, ours will produce the beings that fill it.

So when I look up at the vast expanse of space, I don't think to myself, "Ah, in the end none of this matters." I think: "Well, some of what we do probably doesn't matter. But some of what we do might matter more than anything ever will again. ...It would be really good if we could keep our eye on the ball. ...[gulp]"

Eep! The next century may be super impactful. Or maybe not! See this counterargument: Are we living at the most influential time in history?

4) Gates’ Breakthrough Energy Catalyst is a good example of converting long-term impact (early investment translating into decarbonizing through Wright's Law) into short-term signals (dashboard to present the predicted impact).

5) Babylon Bee: Scientists Warn That Within 6 Months Humanity Will Run Out Of Things To Call Racist

6) The Onion: Company Struggling To Find Diverse Leadership Candidates Among CEO’s Golf Buddies

7) Rhys: Priscilla Chan Divorces Zuck After Three-Way Affair With Siri And Alexa

8) Satire From The Crowd: Leaked Deleted Scene from Fast & Furious 9 is Just Vin Diesel Changing a Tire for 12 Minutes

(Please send me other funny headlines you’ve written!)

9) TikTok of the Week: @raquellily on why Filipino is an ugly language. (BGC Drama Effect provides a great TikTok meme template.)

JOBS AND OPPORTUNITIES

Paradigm is looking for folks to join their (awesome) research team.

One of my top three favorite newsletters, Vox’s Future Perfect, is hiring three writing fellows.

Create a math explainer video and submit it to 3blue1brown’s contest.

Learn to build on Ethereum this August with ETHSummer.

Mariana Mazzucato’s team is hiring research consultants for the UN’s Economic Health For All initiative. Apply by July 14th.

Syndicate DAO is a cool DAO that is decreasing syndicate costs by 100x. They’re hiring for a bunch of roles here.

EVENTS

EthCC is back! Paris, July 20-22, 2021.

Effective Altruist Events Calendar (recurring)

Interintellect Salons (recurring)

The Stoa (recurring)

MUSIC

I’m a pretty big fan of musicians who win MacArthur fellowships. Rhiannon Giddens is a bluegrass singer and banjo player who won a MacArthur in 2017.

She’s so damn good. Before she began producing bluegrass music, she won various competitions for Gaelic lilting singing. See Mouth Music below (and a cappella music from newsletter #56).

She was good before she won a MacArthur. But honestly, she’s been better after it. Her two latest albums have been off-the-charts good.

There Is No Other (2019) was excellent. It has the best rendition of Wayfaring Stranger that I know, plus songs that display her amazing singing and string talent at the same time, like Pizzica di San Vito.

Her newest album, They’re Calling Me Home, came out in April of 2021. My favorite song is Avalon. The music video has delightfully playful dancing too.

In her MacArthur video, Rhiannon talks about how the banjo started in Black slave culture but was appropriated (slash appreciated) by white folks. Makes me think of Gangstagrass, the only hip-hop + bluegrass band I know.

Hope you have a good week! Warmth, Rhys

If you like this newsletter, check out the online community of systems thinkers that I co-founded, Roote.

❤️ Thanks to my generous patrons ❤️

Maciej Olpinski, Jonathan Washburn, Ben Wilcox, Audra Jacobi, Sam Jonas, Patrick Walker, Shira Frank, David Hanna, Benjamin Bratton, Michael Groeneman, Haseeb Qureshi, Jim Rutt, Zoe Harris, David Ernst, Brian Crain, Matt Lindmark, Colin Wielga, Malcolm Ocean, John Lindmark, Collin Brown, Ref Lindmark, James Waugh, Mark Moore, Matt Daley, Peter Rogers, Darrell Duane, Denise Beighley, Scott Levi, Harry Lindmark, Simon de la Rouviere, and Katie Powell.